Table of Contents

- 3:1 Rule

- Ambient

- Amplitude

- Attack

- Audio Interface

- Balanced/Unbalanced Cable

- Bandwidth

- Bit Depth

- Bit Rate

- Cardioid Microphone

- Channel

- Clipping

- Compression

- Condenser Microphone

- DAW

- Decay

- Decibel

- Delay

- Distortion

- Dynamic Microphone

- Dynamic Range

- Envelope

- Equalization (EQ)

- Feedback

- Foley

- Frequency

- Gain

- Generational Loss

- Graphic Equalizer

- Ground

- Headroom

- Hertz

- Instrument Level

- Leakage

- Limiter

- Line Level

- Mastering

- Mic Level

- MIDI

- Mix

- Mono

- Omnidirectional Mic

- Oscillator

- Overdub

- Panning

- Passive Speaker

- Phantom Power

- Phase

- Preamp

- RCA Connector

- Release

- Reverberation

- Ribbon Mic

- Sample Rate

- Sound Wave

- Stereo

- Sustain

- Timbre

- Track

- Transient

- TRS

- TS

- Waveform

- XLR

3:1 Rule

Microphones that are recording in close proximity to one another are subject to phase issues. To avoid phase problems, separate the microphones by a distance that is at least three times the distance of the mics to the source (3:1). For example, if a mic is two feet away from the sound source, then no mic should be closer than six feet from the first mic. This is an approximate rule.
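The arithmetic behind the rule can be sketched in a few lines. This is only an illustration: the function name `min_mic_spacing` and its `ratio` parameter are hypothetical, and the rule itself is approximate.

```python
def min_mic_spacing(source_distance: float, ratio: float = 3.0) -> float:
    """Smallest recommended mic-to-mic distance under the 3:1 rule.

    source_distance: distance from the first mic to its sound source
    (any unit; the result is in the same unit).
    """
    if source_distance < 0:
        raise ValueError("source_distance must be non-negative")
    return ratio * source_distance


# A mic two feet from its source: keep other mics at least this far away (feet).
print(min_mic_spacing(2.0))
```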

Ambient

Within the field of sound, “ambient” has a number of different usages. It can refer to ethereal atmospheric music, the pervasive background noise of a location, or the “room sound” of a space.

Ambient music often favors softer tones and droning sounds over traditional song structures or rhythmic frameworks.

Ambient sound is the background sound or base noise level within a space. When recording in a forested area, the ambient sound may be the breeze, rustling leaves, birdsong, and a jet flying overhead. In an indoor environment, the ambient sound may be the HVAC system, the buzzing of fluorescent bulbs, and the whirring of distant machinery.

Amplitude

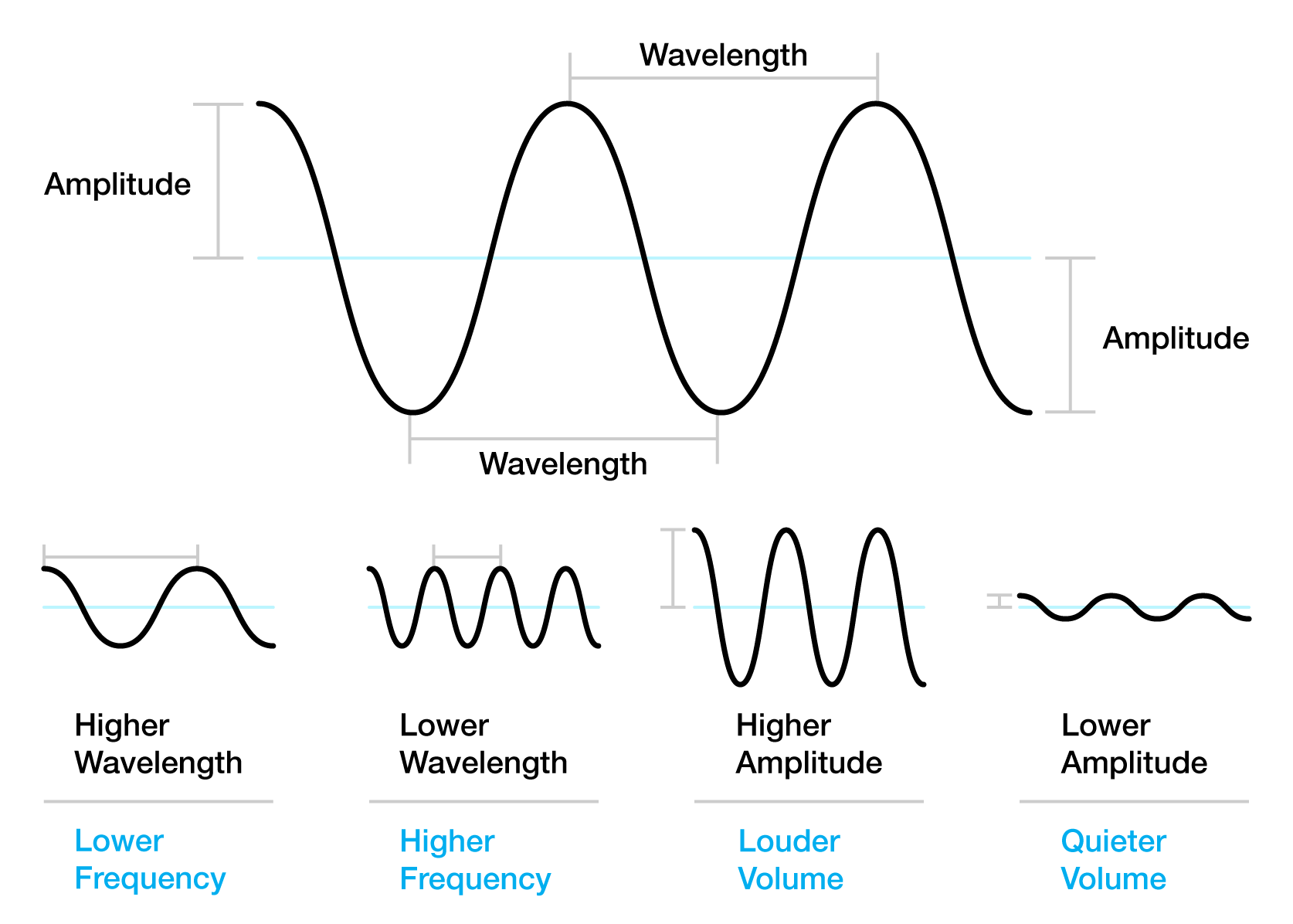

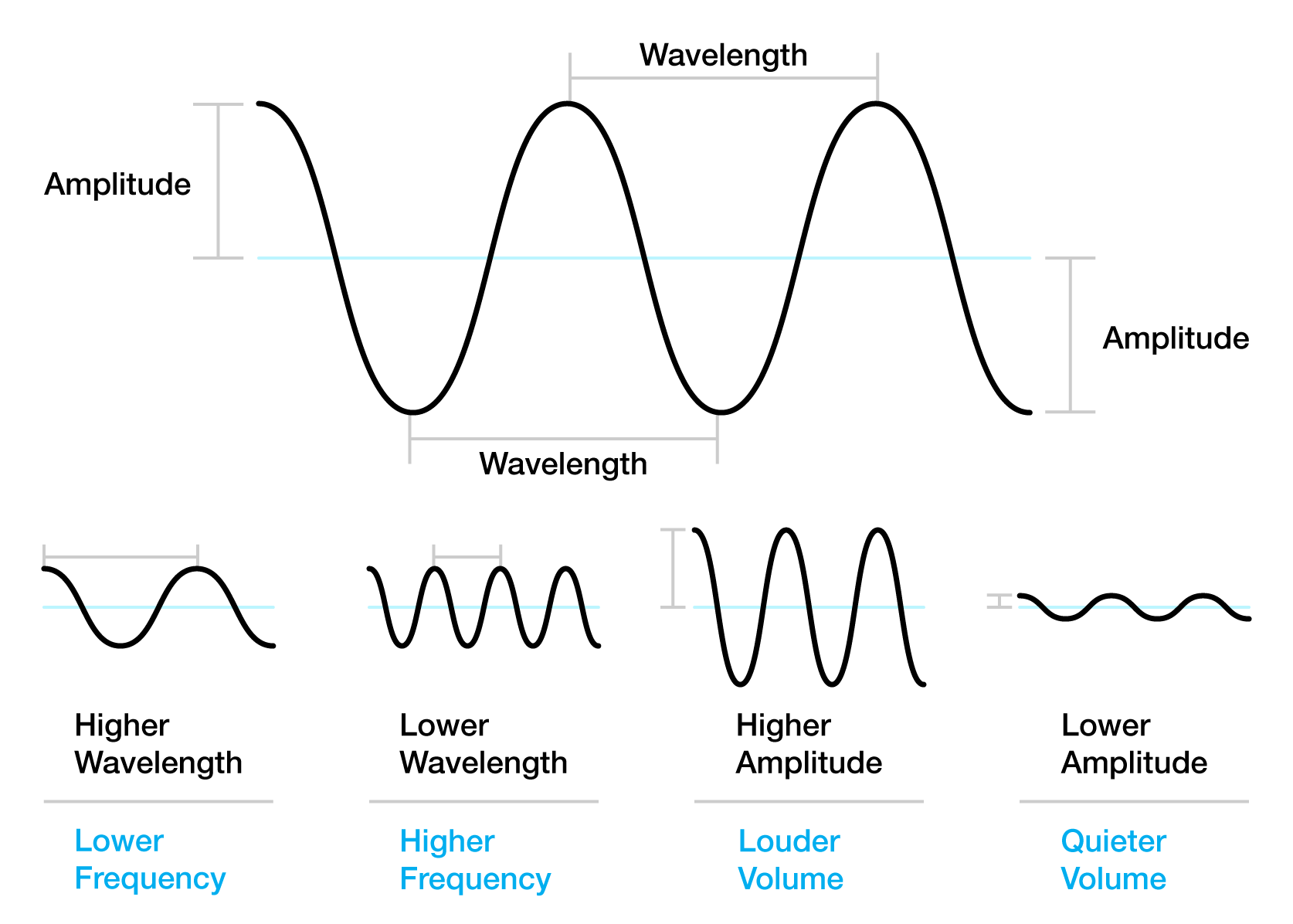

Sound is created by the displacement of particles (typically air, but sound can travel through water and solids) as waves. Amplitude is the peak displacement of these particles caused by a sound wave. The three primary properties used to describe waves are amplitude, wavelength, and frequency. The larger the amplitude, the more intense and loud the sound is. Amplitude is measured in decibels (dB).

Diagram indicating a sine waveform with its amplitude and wavelength labeled.

Attack

Attack can be thought of as the beginning of a sound (wave). A sound with an immediate attack will be heard immediately—like a snare drum being struck. A sound with a slow attack will swell in volume—like a violin being slowly bowed with increasing intensity. Different audio effects often have an “attack” setting indicating how the effect is impacting the beginning of the sound. For example, compression with a slow attack will not take effect immediately at the beginning of a sound. Instead, it will increase in intensity over time, slowly limiting the amplitude of the waveform.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/9SMi47AEnSo.

Audio Interface

An audio interface is a piece of hardware that acts as the digital bridge between an audio device (microphone, instrument, etc.) and your digital audio workstation (DAW), converting the electrical signal from your device into digital data that can be recorded and manipulated in a DAW. Interfaces generally have one or more inputs for 1/4″ instrument cables or XLR cables, a headphone output, and a USB port to connect to your computer. In addition, they typically have a built-in preamplifier to boost mic-level and instrument-level signals to a usable level.

“Watch this BEFORE you buy an audio interface,” posted January 8, 2025, by Sam Wimer, YouTube, 03:03, https://youtu.be/V5KXL_67iZY.

Balanced/Unbalanced Cable

Unbalanced audio cables have two wires: a conductor wire and a ground wire. The conductor wire carries the audio signal, while the ground wire functions as a reference point for the signal. These cables are susceptible to interference, meaning electronic noise entering the signal, such as radio stations or white noise. Unbalanced cables generally take the form of TS cables.

Balanced audio cables have three wires: two conductor wires and a ground wire. The second conductor wire carries the same audio signal, but inverted. Any noise picked up along the cable lands equally on both conductors. At the destination, the second signal is re-inverted (so it is identical to the first signal) and combined with the first. The audio reinforces itself, while the noise, now inverted on one of the two wires, cancels itself out. This leads to generally cleaner audio from balanced cables. Balanced cables generally take the form of TRS or XLR cables.
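The cancellation can be illustrated with a few made-up sample values. This is a simplified sketch that assumes the noise lands identically on both conductors; the function name `balanced_receive` and the sample numbers are hypothetical.

```python
def balanced_receive(signal, noise):
    """Simulate a balanced cable run and the receiver at its destination."""
    # The "hot" wire carries the signal; the "cold" wire carries it inverted.
    # The same noise is assumed to be picked up by both wires.
    hot = [s + n for s, n in zip(signal, noise)]
    cold = [-s + n for s, n in zip(signal, noise)]
    # Re-inverting the cold wire and combining it with the hot wire is the
    # same as subtracting: the noise terms cancel and the signal doubles,
    # so the result is halved to restore the original level.
    return [(h - c) / 2 for h, c in zip(hot, cold)]


signal = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5]
noise = [0.2, -0.1, 0.3, 0.05, -0.2, 0.1]
recovered = balanced_receive(signal, noise)
print(recovered)
```

An unbalanced cable has no second conductor to compare against, so in this model it would simply deliver `signal + noise`.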

“Balanced vs. Unbalanced Audio Cables,” posted on July 31, 2017, by BoxCast, YouTube, 03:18, https://youtu.be/g7Pd6NdgX80.

Bandwidth

Bandwidth is the range of frequencies in an audio signal, measured in Hertz (Hz). Bandwidth controls are generally broken into treble, bass, and midrange frequencies.

Bit Depth

Bit depth refers to the amount of information (measured in bits) that exists in each audio sample. This corresponds directly to the resolution of each sample. Compact Disc digital audio uses 16 bits per sample, whereas DVD-Audio and Blu-ray support 24 bits per sample. When recording audio, the bit-depth capabilities of your device indicate the dynamic range it can capture without clipping. Bit depth in recording also indicates how far above the noise floor your recording sits. So, devices with higher bit-depth capabilities allow for the recording of louder sounds without distortion, and also a lower noise floor.

- 16-bit: ~96dB of dynamic range

- 24-bit: ~144dB of dynamic range

- 32-bit float: ~1528dB of dynamic range

Considering that a rock concert would be around 125dB, and gunshots and fireworks are about 140dB, 32-bit float allows for the recording of very loud sounds.
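The 16-bit and 24-bit figures above follow from the number of levels an integer sample can represent. A minimal sketch, assuming plain integer (fixed-point) samples; the function name `pcm_dynamic_range_db` is hypothetical, and 32-bit float is left out because it follows a different, floating-point calculation.

```python
import math


def pcm_dynamic_range_db(bits: int) -> float:
    """Theoretical dynamic range of integer PCM audio, in decibels.

    An n-bit sample can take 2**n distinct levels, and a ratio of levels
    is converted to decibels with 20 * log10(ratio). This works out to
    roughly 6 dB per bit.
    """
    return 20 * math.log10(2 ** bits)


for bits in (16, 24):
    print(bits, "bits:", round(pcm_dynamic_range_db(bits), 1), "dB")
```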

Bit Rate

In digital audio recording, bit rate refers to the quantity of data transferred during a given period of time (normally one second). This is typically expressed in kb/s (kilobits per second) or Mb/s (megabits per second). For example, the bit rate of a standard CD is 1411.2 kilobits/second (2 channels x 16 bits per sample x 44,100 Hz sampling frequency). MP3 bit rates typically range from 128kb/s to 320kb/s, while a Dolby Digital 5.1 surround soundtrack on a DVD might range between 384 and 448kb/s. (See Sample Rate.) For a given encoding format, a higher bit rate generally means higher audio quality.
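The CD example above is a straight multiplication, sketched here for uncompressed audio. The function name `pcm_bit_rate_kbps` is hypothetical; compressed formats such as MP3 do not follow this formula.

```python
def pcm_bit_rate_kbps(channels: int, bits_per_sample: int, sample_rate_hz: int) -> float:
    """Bit rate of uncompressed PCM audio in kilobits per second."""
    bits_per_second = channels * bits_per_sample * sample_rate_hz
    return bits_per_second / 1000


# Standard CD audio: 2 channels, 16 bits per sample, 44,100 samples per second.
cd_rate = pcm_bit_rate_kbps(2, 16, 44_100)
print(cd_rate)  # 1411.2
```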

Cardioid Microphone

Cardioid refers to the microphone’s polar pattern, that is, the directionality of how a mic picks up sound. Cardioid is the most common polar pattern, named because the diagram of the polar pattern looks like an upside-down heart. Cardioid microphones capture sound directly in front of the microphone’s capsule and reject sound immediately behind it. These microphones are often used to record or amplify vocals since they capture the sounds directly in front of the microphone while ignoring the sounds behind it. They are also used to record instruments (aimed at the sound hole of an acoustic guitar, or near the speaker cone of a guitar amplifier) and in field recording, since they can focus on near sounds while not capturing much of the background noise.
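A common idealized model of the cardioid pattern gives the relative sensitivity at an angle θ from the front of the mic as 0.5 × (1 + cos θ). That formula is a textbook approximation brought in here for illustration; real microphones deviate from it, and their patterns vary with frequency. The function name `cardioid_sensitivity` is hypothetical.

```python
import math


def cardioid_sensitivity(angle_degrees: float) -> float:
    """Relative pickup (0.0 to 1.0) of an ideal cardioid mic.

    0 degrees is directly in front of the capsule; 180 is directly behind.
    """
    theta = math.radians(angle_degrees)
    return 0.5 * (1 + math.cos(theta))


for angle in (0, 90, 180):
    print(angle, "degrees:", round(cardioid_sensitivity(angle), 3))
```

In this model the mic picks up sound from the sides at half strength and rejects sound from directly behind, which matches the heart-shaped diagram.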

“How Do Microphone Polar Patterns Work? | Cardioid, Supercardioid, Omni, Figure-8, & More,” posted April 7, 2022, by Audio University, YouTube, 10:02, https://youtu.be/qV6mxPpqTv0.

Channel

In audio recording, the number of channels indicates the number of separate and unique audio tracks that are embedded in the recording material and can be independently extracted to different speakers. For example, a stereo recording consists of a left and a right channel, and is often referred to as a two-channel recording. A Dolby Atmos recording has a total of twelve channels and is labelled as 7.1.4. This naming/numbering convention indicates “seven ear-level channels around the listener, one LFE (low frequency effects) channel, and four overhead channels.”1

- “Dolby Atmos Documentation,” Dolby.com, accessed August 21, 2025, https://professional.dolby.com/gaming/gaming-getting-started/dolby-atmos-documentation/

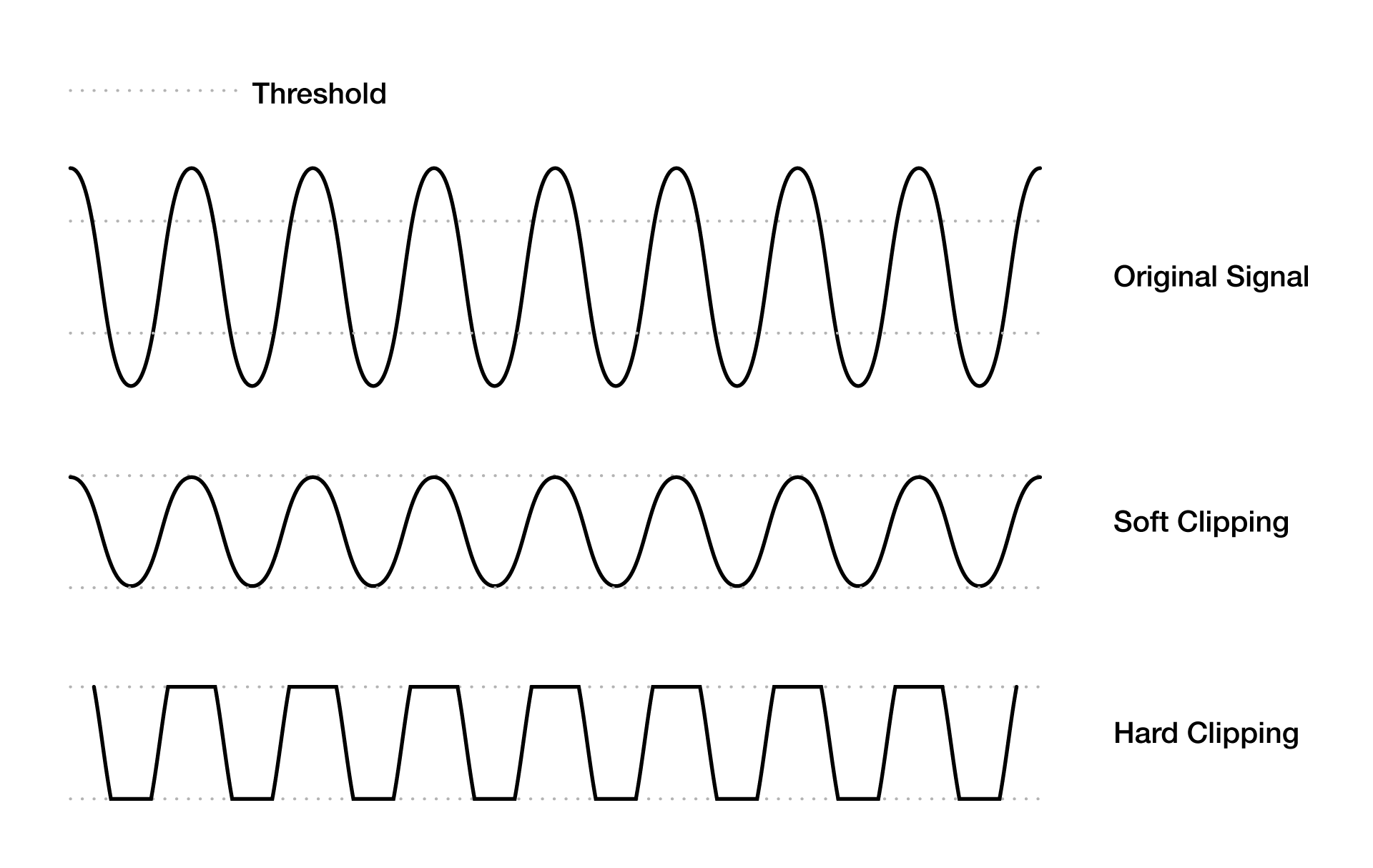

Clipping

Clipping is a non-linear form of distortion that produces harmonic frequencies by cutting off the peaks of audio signals. This typically happens in an amplifier when its input signal is larger than the amplifier can handle, so the signal is clipped. Hard clipping just lops off the peaks of the waveform, resulting in significant distortion (think of heavy metal guitar sounds). Soft clipping instantaneously applies a waveshaping function (so the attack is immediate) to soften the clipped edges of the waveform so the distortion is limited. Soft clipping and compression achieve similar results through different processes.

Illustration of soft and hard clipping of a waveform.
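The difference between the two can be sketched per sample. Hard clipping is a simple clamp. For soft clipping, the hyperbolic tangent used below is just one commonly used waveshaping function, chosen here as an assumption; other curves are equally valid. The function names are hypothetical.

```python
import math


def hard_clip(sample: float, ceiling: float = 1.0) -> float:
    """Chop off anything beyond the ceiling, leaving a flat top."""
    return max(-ceiling, min(ceiling, sample))


def soft_clip(sample: float, ceiling: float = 1.0) -> float:
    """Bend the waveform smoothly toward the ceiling instead of chopping it.

    tanh is an assumed waveshaper for illustration only.
    """
    return ceiling * math.tanh(sample / ceiling)


# A sine wave driven to 1.5x the ceiling, sampled at 8 points per cycle.
driven = [1.5 * math.sin(2 * math.pi * n / 8) for n in range(8)]
print([round(hard_clip(x), 3) for x in driven])
print([round(soft_clip(x), 3) for x in driven])
```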

Compression

Compression is the reduction of a signal’s span of amplitudes (its dynamic range). A compressor examines the amplitude of the input signal, then derives and applies a gain factor to lessen the amplitude of the loudest parts. With the peaks reduced, the overall level can be raised, making the louder parts quieter and the quieter parts easier to hear as a result. A limiter is a compressor with a very high ratio. Tracking the amplitude is a time-based process, meaning that the attack is a bit slower than with clipping and can be adjusted.
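The gain calculation at the heart of a compressor can be sketched as follows. This is a simplified, static model working on levels in decibels; the parameter names (`threshold_db`, `ratio`, `makeup_db`) and their defaults are assumptions, and the attack and release timing that a real compressor applies is left out.

```python
def compress_db(level_db: float, threshold_db: float = -20.0,
                ratio: float = 4.0, makeup_db: float = 0.0) -> float:
    """Output level for a given input level, both in dB.

    Below the threshold the signal passes unchanged. Above it, every
    `ratio` dB of input produces only 1 dB of output. `makeup_db` raises
    everything afterward, which is what lifts the quiet parts.
    """
    if level_db <= threshold_db:
        compressed = level_db
    else:
        compressed = threshold_db + (level_db - threshold_db) / ratio
    return compressed + makeup_db


print(compress_db(-8.0))   # loud input: 12 dB over the threshold becomes 3 dB over
print(compress_db(-30.0))  # quiet input: unchanged
```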

“What is Audio Compression? How to Use a Compressor | LANDR Mix Tips #8,” posted August 16, 2018, by LANDR, YouTube, 04:59, https://youtu.be/4uHilAANFfc5QNwl.

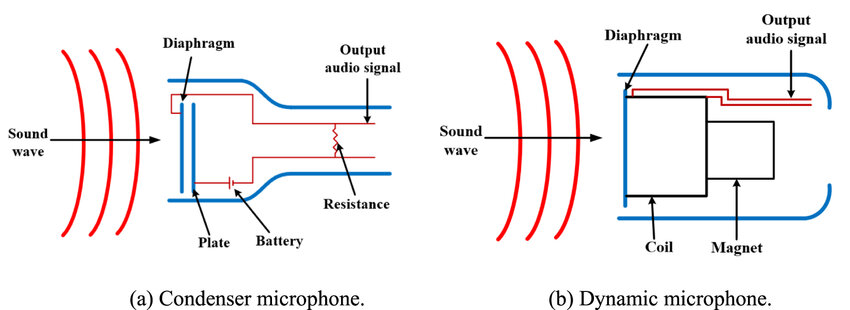

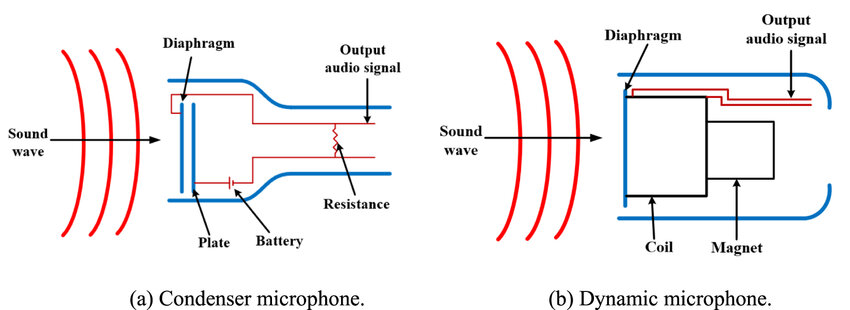

Condenser Microphone

There are two main types of microphones: dynamic and condenser. Condenser (or capacitor) microphones work according to variable capacitance. Sound waves vibrate a thin, electrically conductive diaphragm that sits just in front of a fixed, charged backplate; together the two form a capacitor. As the diaphragm moves, the spacing between it and the backplate changes, which changes the capacitance and produces a small, varying voltage. Circuitry inside the microphone, powered by a phantom power supply (+48V) or battery, then boosts that voltage into an electrical output that is sent along a cable to a processor or amplifier.

Source: Sheng-Jun Ao, Yijia Sun, Song-Mei Yuan, Xu-hui Liu, Chun-yang Zhao, Hu Gong, “An ultrasonic pressure field measurement system for ultrasonic-assisted machining based on 6-axis industrial robot,” Measurement Science and Technology 35. 10.1088/1361-6501/ad7750.

DAW

DAW stands for “digital audio workstation.” This is a digital/computer-based interface that is used to record and manipulate audio as digital signals. This is in contrast to analog consoles, where sounds recorded to magnetic tape, or another analog medium, are manipulated using analog equipment and effects. Examples of DAWs include Audacity, Audition, GarageBand, Logic, Ableton, and more.

Decay

A sound’s envelope includes the attack, decay, sustain, and release (ADSR). The decay is what occurs after the attack. So, once the initial attack shapes the transient of the sound (how the sound’s volume initially increases), the sound will naturally decrease in volume. This is the decay. The decay time indicates how quickly the sound decreases. The sustain level indicates what volume level the sound decreases to during the decay.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/9SMi47AEnSo&t=122.

Decibel

Decibels are the unit of measurement of sound pressure level (SPL). As a measurement unit, the decibel (dB) can be confusing because it is not based on absolute values, but rather on the ratio between two levels: a measured level and a reference level. The decibel is therefore a logarithmic scale that indicates the relative change in level. Doubling the electrical power generating a sound will only yield a 3 dB increase. To achieve a doubling of perceived loudness requires the power to be increased roughly tenfold (yielding an additional 10 dB). To give you an idea of the scale, the lower threshold of human hearing is 0 dB at 1kHz; speaking voices are generally under 60 dB; a vacuum cleaner might measure around 70 dB; loud factories might be about 85 dB and require hearing protection; a rock concert may be around 125 dB. Anything greater than 192 dB might kill you.
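The 3 dB and 10 dB figures above come from the standard formula for a power ratio, 10 × log10(ratio), sketched below. The function name `power_ratio_to_db` is hypothetical.

```python
import math


def power_ratio_to_db(ratio: float) -> float:
    """Convert a ratio between two power levels into decibels."""
    return 10 * math.log10(ratio)


print(round(power_ratio_to_db(2), 2))   # doubling the power: about 3 dB
print(round(power_ratio_to_db(10), 2))  # ten times the power: 10 dB
```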

The term “decibel” is named for Alexander Graham Bell, whose lab first used the transmission unit (TU) to quantify the audio level reduction over one mile of telephone cable. Later, the TU was renamed the bel (B) to honor Bell and his lab. 1 dB is 1/10 of a B. 10 dB = 1 B.

“Decibels as Fast As Possible,” posted September 14, 2024, by Techquickie, YouTube, 05:19, https://youtu.be/WZLQoP6CM0k.

Delay

Think of delay like an echo. When a delay effect is used, the input signal is recorded and then replayed after a period of time, sometimes repeatedly. The replayed signal is sometimes mixed with itself and sent back through the signal path, creating a feedback loop that keeps merging and amplifying. Originally, this was accomplished on tape recordings, where there was a distance between the record head of the machine and the playback head. The sound would be recorded, then there was a slight delay before that section of magnetic tape reached the play head. If the live sound was mixed with the playback sound, there would be an echo. By changing the distance between the record and play heads, or by slowing down or speeding up the tape, the time between the echoes could be increased or decreased, resulting in longer or shorter delays.
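A digital version of the same idea can be sketched with a small circular buffer standing in for the loop of tape. This is a minimal illustration, not how any particular delay unit is built; the function name `feedback_delay` and its parameters are hypothetical.

```python
def feedback_delay(samples, delay_samples, feedback=0.5, mix=0.5):
    """Echo effect: replay the input after `delay_samples`, with repeats.

    feedback: how much of each echo is fed back in to create the next echo.
    mix: how loud the echoes are relative to the live signal.
    """
    if delay_samples < 1:
        raise ValueError("delay_samples must be at least 1")
    buffer = [0.0] * delay_samples  # plays the role of the tape between heads
    out = []
    idx = 0
    for x in samples:
        delayed = buffer[idx]                  # what the "play head" reads now
        out.append(x + mix * delayed)          # live sound plus the echo
        buffer[idx] = x + feedback * delayed   # "record head" writes input plus echo
        idx = (idx + 1) % delay_samples
    return out


# A single click followed by silence, with a two-sample delay.
click = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
print(feedback_delay(click, delay_samples=2, feedback=0.5, mix=1.0))
```

Each echo comes back at half the level of the one before it because `feedback` is 0.5. A longer buffer plays the same role as moving the tape heads farther apart.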

“This Affordable Tape Echo Plays Like An Instrument,” posted February 16, 2023, by Hainbach, YouTube, 09:49, https://youtu.be/pWV5_IrF0s8&t=3.

Distortion

Any change to the reproduction of a waveform is referred to as distortion. However, most commonly distortion refers to what happens to a sound when hard clipping is applied. Think of distorted guitars in rock music. That is achieved by pushing the amplitude of the signal higher than the amplifier and/or speaker’s capacity to faithfully pass along the signal, so the sound gets clipped and distorted.

“What Is Distortion?,” posted September 23, 2021, by Sweetwater, YouTube, 03:05, https://youtu.be/VvOxWDQIc7M&t=50.

Dynamic Microphone

There are two main types of microphones: dynamic and condenser. Dynamic mics utilize electromagnetic induction. As a sound wave moves through the air, it vibrates the microphone’s diaphragm thereby pushing an electrical conductor inside a magnetic field to generate voltage that is sent along a cable to a signal processor or amplifier. This is how speakers work, but in reverse. For speakers, voltage is passed along the cable, exciting the magnetic field, which moves the conductor and the attached diaphragm (speaker cone), which then moves the air. Moving coil (where a coil of wire acts as the conductor attached to the diaphragm) and ribbon mics are types of dynamic microphones.

Unlike condenser mics, dynamic mics do not need external power in the form of batteries or phantom power supplies. They do not require much maintenance and are fairly rugged.

Source: Sheng-Jun Ao, Yijia Sun, Song-Mei Yuan, Xu-hui Liu, Chun-yang Zhao, Hu Gong, “An ultrasonic pressure field measurement system for ultrasonic-assisted machining based on 6-axis industrial robot,” Measurement Science and Technology 35. 10.1088/1361-6501/ad7750.

Dynamic Range

This refers to the range of sound intensity a system can handle without clipping or compressing the signal.

Envelope

The envelope is how a sound changes over time—this includes its attack, decay, sustain, and release (ADSR).
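The four stages can be sketched as a function that returns the volume level at any moment after a key is pressed. This is a simplified model with straight-line segments, which is an assumption; synthesizers often use curved segments. All names here are hypothetical. Attack, decay, and release are times in seconds, while sustain is a level between 0 and 1.

```python
def held_level(t, attack, decay, sustain):
    """Level while the key is held down, t seconds after the key press."""
    if t < attack:
        return t / attack  # attack: rise from silence to full level
    if t < attack + decay:
        # decay: fall from full level down to the sustain level
        return 1.0 - (1.0 - sustain) * (t - attack) / decay
    return sustain  # sustain: stay here for as long as the key is held


def adsr_level(t, attack, decay, sustain, release, note_off):
    """Envelope level (0.0 to 1.0) at time t; the key is let go at note_off."""
    if t < 0:
        return 0.0
    if t <= note_off:
        return held_level(t, attack, decay, sustain)
    start = held_level(note_off, attack, decay, sustain)
    elapsed = t - note_off
    if elapsed >= release:
        return 0.0
    # release: fade from the level at key-up down to silence
    return start * (1.0 - elapsed / release)


settings = dict(attack=0.1, decay=0.2, sustain=0.5, release=0.4, note_off=1.0)
for t in (0.05, 0.1, 0.5, 1.2, 2.0):
    print(t, round(adsr_level(t, **settings), 3))
```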

Equalization (EQ)

Equalization (abbreviated EQ) is the adjustment of timbre, or tone quality, achieved by boosting or attenuating the amplitude of a signal at different frequencies. Sometimes EQ is used as a verb: “I still need to EQ that audio track.” In this case, it indicates that the track’s tone needs to be adjusted by altering certain frequencies such as treble, mids, and bass.

For reference, the following generally indicates how low, mid, and high frequencies are categorized:

- Low = 20Hz–300Hz

  - Sub Bass: 20Hz–40Hz

  - Low End: 40Hz–160Hz

  - Upper Low End: 160Hz–300Hz

- Mid = 300Hz–5kHz (kilohertz)

  - Low Mids: 300Hz–800Hz

  - Mids: 800Hz–2.5kHz

  - High Mids: 2.5kHz–5kHz

- High = 5kHz–20kHz

  - High End: 5kHz–10kHz

  - Ultra High: 10kHz–20kHz

Feedback

Feedback occurs when a sound loop exists between an audio input (a microphone, for example), and an output (a PA speaker). The sound goes into the microphone, comes out of the speaker, which then is picked up by the microphone and sent through the speaker again, which in turn is picked up by the microphone, and so on. That is why in live amplified sound situations, it is best to keep the speakers pointed away from the microphones, or locate the speakers in front of the microphone and orient the speakers away from the mic, and the mic away from the speakers. This is also why cardioid mics are preferred in these situations since they are less likely to pick up sound behind the diaphragm (where the speakers are often located). Jimi Hendrix was known for employing feedback by placing his guitar pickups (the magnetic coils that would pick up the vibrations of the metal guitar strings) toward his speaker which would vibrate the strings, and those vibrations would go through the pickups back into the speaker, and create an audio loop.

Foley

These are sound effects produced typically for film, but also for radio and other audio-only experiences. Foley artists are the individuals who create the sounds, often from unconventional materials.

“The Magic of Making Sound,” posted January 12, 2017, by Great Big Story, YouTube, 06:32, https://youtu.be/UO3N_PRIgX0.

Frequency

Frequency is the number of complete cycles (wavelengths) of a sound wave that occur each second, expressed in cycles per second, or hertz (Hz). The pitch of a sound is determined by the frequency: the higher/faster the frequency, the higher the pitch. Human hearing falls within the 20 Hz–20,000 Hz frequency range.
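Frequency is tied to two other quantities: the time each cycle takes (the period) and the physical length of each cycle (the wavelength). A rough sketch follows, assuming sound traveling through air at about 343 meters per second, a typical room-temperature figure that is not stated above. The names are hypothetical.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: air at roughly 20 degrees Celsius


def period_s(frequency_hz: float) -> float:
    """Seconds taken by one complete cycle."""
    return 1.0 / frequency_hz


def wavelength_m(frequency_hz: float) -> float:
    """Physical length of one cycle in air, in meters."""
    return SPEED_OF_SOUND_M_PER_S / frequency_hz


# The two ends of human hearing.
for freq in (20, 20_000):
    print(freq, "Hz:", round(wavelength_m(freq), 4), "m per cycle")
```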

For reference, the following generally indicates how low, mid, and high frequencies are categorized:

- Low = 20Hz–300Hz

  - Sub Bass: 20Hz–40Hz

  - Low End: 40Hz–160Hz

  - Upper Low End: 160Hz–300Hz

- Mid = 300Hz–5kHz (kilohertz)

  - Low Mids: 300Hz–800Hz

  - Mids: 800Hz–2.5kHz

  - High Mids: 2.5kHz–5kHz

- High = 5kHz–20kHz

  - High End: 5kHz–10kHz

  - Ultra High: 10kHz–20kHz

Diagram indicating a sine waveform with its amplitude and wavelength labeled.

Gain

The amount a circuit amplifies a signal—expressed in decibels (dB). Adding gain can boost the signal beyond the capabilities of the amplifier or speaker resulting in clipping or distortion.

Generational Loss

As subsequent copies are made, the signal becomes progressively more compressed and/or degraded. Each new generation is a lesser copy than the original. This is more prominent in analog rerecording than in digital, although when a digital audio signal is compressed to achieve a lower file size, there is a certain loss of fidelity, so each subsequent copy that is saved and compressed will result in distorted frequencies and digital artifacts.

Graphic Equalizer

An equalizer where the frequency spectrum is broken into segments/bands that can be controlled by an interface—typically sliding faders. The range of human hearing falls within 20Hz–20,000Hz, so most graphic equalizers divide up those frequencies into 10 bands (octaves), 15 bands (2/3 octaves), or 30 bands (1/3 octaves). The “graphic” part of the name refers to the fact that the interface provides a visual representation of the EQ curve.
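An octave is a doubling of frequency, so the center frequencies of a 10-band (one band per octave) equalizer can be approximated by repeatedly doubling a starting frequency. The sketch below assumes a starting point of 31.25 Hz; actual products label their faders with rounded nominal values (such as 31.5 Hz and 63 Hz) that differ slightly. The function name `octave_band_centers` is hypothetical.

```python
def octave_band_centers(lowest_hz: float = 31.25, highest_hz: float = 20_000.0):
    """Approximate center frequencies for a one-band-per-octave equalizer."""
    centers = []
    freq = lowest_hz
    while freq <= highest_hz:
        centers.append(freq)
        freq *= 2  # one octave up is double the frequency
    return centers


bands = octave_band_centers()
print(len(bands), "bands:", bands)
```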

For reference, the following generally indicates how low, mid, and high frequencies are categorized:

- Low = 20Hz–300Hz

  - Sub Bass: 20Hz–40Hz

  - Low End: 40Hz–160Hz

  - Upper Low End: 160Hz–300Hz

- Mid = 300Hz–5kHz (kilohertz)

  - Low Mids: 300Hz–800Hz

  - Mids: 800Hz–2.5kHz

  - High Mids: 2.5kHz–5kHz

- High = 5kHz–20kHz

  - High End: 5kHz–10kHz

  - Ultra High: 10kHz–20kHz

Ground

Ground (also called earth), in electrical engineering, refers to the point from which voltages in a circuit are measured; the path along which electrical current returns; or a physical connection to the actual ground/earth. That connection makes a circuit less likely to cause electrical shock, less likely to build up static electricity, and provides a path for electricity that allows circuit breakers to trip to avoid overload and fire hazards.

When dealing with electronic audio equipment like electric instruments or microphones, having them plugged into grounded circuits is important to avoid being shocked. Typically, electrical equipment whose power plug has three prongs (in the US) is grounded. The third prong is referred to as the ground.

Headroom

Based on an amplifier’s power supply, the amp may be able to temporarily go beyond its rated power to briefly represent amplitudes without hard clipping. So, rather than having an amplitude ceiling that cuts off the tops of the waveforms, the waveforms can temporarily extend beyond the ceiling to produce a clean, rather than distorted, signal. Thus, the signal is given more “headroom.”

Hertz

Hertz (Hz) is the unit of frequency: the number of complete cycles of a wave that occur within one second. One hertz is one cycle per second. Commonly, instruments tune the A above middle C (A4) to 440 Hz, which is called A440. The hertz is named for the German physicist Heinrich Rudolf Hertz.

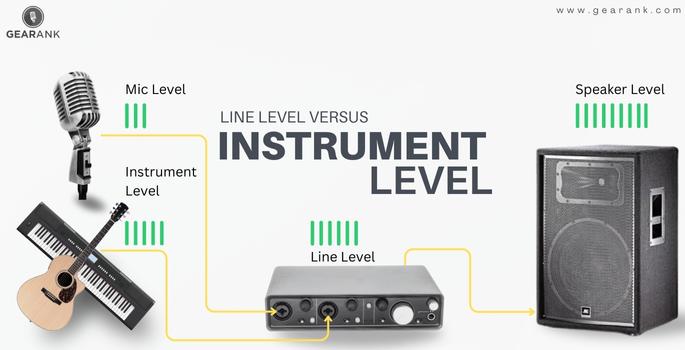

Instrument Level

Instrument level refers to the electrical signal level produced by electric musical instruments (between -30 dB and -10 dB), such as electric guitars and bass guitars. Due to the signal’s high impedance, it does not require much voltage to push the signal to a usable level. An instrument-level signal requires a preamplifier, power amp, or audio interface to boost the signal to line level.

A diagram of mic level, instrument level, line level, and speaker level audio signals.

Leakage

When attempting to record isolated sounds, background sounds are picked up by the microphone. This is called “leakage.” This can be seen as bad if the targeted sound needs to be in complete isolation, or it can be used to interesting effect when recording multiple isolated sounds within a space, since the leakage that occurs on each of the tracks helps to generate a sense of space.

Limiter

A limiter is a compressor with a very high ratio, typically 10:1 or greater. It automatically and briefly applies heavy compression to restrict the range of an audio signal, preventing high-level transients from rising much beyond a set threshold.

Line Level

An audio signal level that travels from the preamplifier to the amplifier. Its signal is about -10dBV for consumer equipment like MP3 and DVD players (+4dBu for professional equipment like mixing consoles) and is around one volt (about 1,000 times stronger than a mic-level signal).

A diagram of mic level, instrument level, line level, and speaker level audio signals.
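The dBV and dBu figures quoted for line level can be converted to volts. By convention, 0 dBV is referenced to 1 volt and 0 dBu to about 0.7746 volts; those reference values are standard but are not stated above. The function names are hypothetical.

```python
def dbv_to_volts(dbv: float) -> float:
    """Convert a level in dBV (referenced to 1 V) to volts."""
    return 10 ** (dbv / 20)


def dbu_to_volts(dbu: float) -> float:
    """Convert a level in dBu (referenced to about 0.7746 V) to volts."""
    return 0.7746 * 10 ** (dbu / 20)


print(round(dbv_to_volts(-10), 3), "V  (consumer line level, -10 dBV)")
print(round(dbu_to_volts(4), 3), "V  (professional line level, +4 dBu)")
```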

Mastering

The mastering process is where the audio is equalized and its dynamics evened out to ensure a consistent sound character and to optimize playback on the widest possible range of sound systems. Appropriate signal processing may also be applied to make the mastered material suitable for its intended medium (such as controlling transient peaks and dynamics and mono-ing the bass for vinyl records, etc.).

Mic Level

An audio signal level that travels from the microphone to the preamplifier. Its signal ranges from about thousandths of a volt (0.001V) up to tenths of a volt (0.1V). These are the weakest of the audio signal levels. As such, they require pre-amplification before the signal reaches the amplifier.

A diagram of mic level, instrument level, line level, and speaker level audio signals.

MIDI

MIDI (Musical Instrument Digital Interface) is a protocol that allows computers, synthesizers, and other capable electronics to communicate. It allows devices to share information like tempo, dynamics, pitches, and more.

“The Basics of Mixing in Ableton Live | Part 22/25 | Erin Barra,” posted May 4, 2020, by Berklee Online, YouTube, 12:09, https://youtu.be/kqmV40d4ZGc.

Mix

Mixing is the process of combining multiple sounds/tracks into audio channels. A mixing engineer is seeking to balance dynamics, frequencies, and panoramic positioning within the track and across multiple audio tracks in the case of music albums, or TV/film.

“The Basics of Mixing in Ableton Live | Part 22/25 | Erin Barra,” posted May 4, 2020, by Berklee Online, YouTube, 12:09, https://youtu.be/kqmV40d4ZGc.

Mono

This is short for “monaural,” which refers to a single audio channel, rather than “stereo,” which refers to two audio channels (typically left and right).

Omnidirectional Mic

A microphone whose polar pattern is equally sensitive in all directions around the capsule. These are used when you want to capture all the sound in a given space. These microphones tend to be condenser mics, which are more sensitive than dynamic mics and produce weaker signals, thereby requiring a boost through phantom power.

Oscillator

A circuit designed to generate a periodic electrical waveform (a sine wave, square wave, or triangle wave). An audio oscillator produces frequencies in the 20 Hz to 20 kHz range (the range of human hearing). A low-frequency oscillator (LFO) generates frequencies below 20 Hz. Since an LFO operates below human hearing, it is often used to control other factors of audible signals such as phase, volume, panning, filter frequency, and more. For example, when an LFO is routed to control the amplitude of another audio signal, it can produce a tremolo effect where the volume of the signal pulses. When the LFO controls pitch, the signal warbles up and down in pitch, creating a vibrato effect.
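The tremolo case can be sketched by letting a slow sine wave raise and lower the volume of another signal. This is a minimal illustration; the function name `tremolo` and the `lfo_hz` and `depth` parameters are hypothetical.

```python
import math


def tremolo(samples, sample_rate, lfo_hz=5.0, depth=0.5):
    """Pulse the volume of `samples` using a sine-wave LFO.

    depth: 0.0 leaves the signal untouched; 1.0 lets the volume dip to silence.
    """
    out = []
    for n, x in enumerate(samples):
        # The LFO swings between -1 and 1 at lfo_hz cycles per second.
        lfo = math.sin(2 * math.pi * lfo_hz * n / sample_rate)
        # Map the LFO onto a gain that moves between (1 - depth) and 1.
        gain = 1.0 - depth * 0.5 * (1.0 + lfo)
        out.append(x * gain)
    return out


# A steady signal of constant level, so the output shows the gain directly.
steady = [1.0] * 100
pulsed = tremolo(steady, sample_rate=100, lfo_hz=5.0, depth=0.5)
print(round(min(pulsed), 3), round(max(pulsed), 3))
```

Routing the same LFO to pitch instead of gain would give vibrato rather than tremolo.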

Overdub

Overdubbing is the process of recording additional tracks to an existing audio track, while listening to the original. This is in contrast to live recording when all sounds are happening simultaneously and recorded simultaneously. An example of overdubbing is adding foley sounds to a film soundtrack while simultaneously listening to a recording of the underlying music.

Panning

This is the process of moving sounds within a stereo field into different channels to locate the audio in different areas of a virtual space. For example, panning a vocal track to the left channel of a stereo field makes it sound as if it is coming from the left of the listener. In a seven-channel audio setup, audio can be panned to a rear channel to make it sound as if it is coming from behind the listener.
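For a stereo field, panning comes down to choosing how much of a sound goes to the left channel and how much to the right. The sketch below uses a "constant power" pan law, which is one common approach picked here as an assumption; mixers and DAWs differ in the exact curve they use. The function name `pan_gains` is hypothetical.

```python
import math


def pan_gains(position: float):
    """Left and right channel gains for a pan position.

    position: -1.0 is hard left, 0.0 is center, 1.0 is hard right.
    """
    if not -1.0 <= position <= 1.0:
        raise ValueError("position must be between -1.0 and 1.0")
    # Map the position onto a quarter circle so that
    # left**2 + right**2 stays constant as the sound moves.
    angle = (position + 1.0) * math.pi / 4
    return math.cos(angle), math.sin(angle)


for position in (-1.0, 0.0, 1.0):
    left, right = pan_gains(position)
    print(position, round(left, 3), round(right, 3))
```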

Passive Speaker

This is a speaker that requires an external power amplifier. A powered speaker has an internal amplifier.

Phantom Power

A method of feeding necessary power to a condenser microphone through the microphone cable.

Phase

Phase is the time relationship between two waveforms. If there are two waveforms that are identical, and they are occurring simultaneously, the peaks and valleys of those waveforms amplify each other, increasing the amplitude. This is called constructive interference. If we delay one of the waveforms so that they oppose each other (as one waveform moves up, the other waveform moves down), they cancel each other out. This is called destructive interference. We could also delay the second signal just slightly, so that some parts of the waveform experience destructive interference while others experience constructive interference, thereby distorting the sound.

Essentially, this is how active noise canceling headphones work. They have a microphone that receives surrounding sounds, then they generate a waveform that is opposite to the surrounding sound, thereby cancelling it.
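Constructive and destructive interference can be checked numerically by adding a sine wave to a shifted copy of itself and measuring the loudest point of the result. This is a small illustrative sketch; the function name `summed_peak` is hypothetical.

```python
import math


def summed_peak(phase_shift_radians: float, points: int = 1000) -> float:
    """Peak level of a sine wave added to a phase-shifted copy of itself."""
    peak = 0.0
    for i in range(points):
        t = 2 * math.pi * i / points  # one full cycle
        combined = math.sin(t) + math.sin(t + phase_shift_radians)
        peak = max(peak, abs(combined))
    return peak


print(round(summed_peak(0.0), 3))          # in phase: the waves reinforce each other
print(round(summed_peak(math.pi), 3))      # half a cycle apart: the waves cancel
print(round(summed_peak(math.pi / 2), 3))  # a quarter cycle apart: partial reinforcement
```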

“The Basics of Mixing in Ableton Live | Part 22/25 | Erin Barra,” posted May 4, 2020, by Berklee Online, YouTube, 12:09, https://youtu.be/KRWhf_L_xYU&t=19.

Preamp

This is the abbreviated form of “preamplifier.” A preamp is a device that boosts weak audio signal voltages, such as those from microphones or instruments, to a nominal line level that can be used by power amplifiers.

RCA Connector

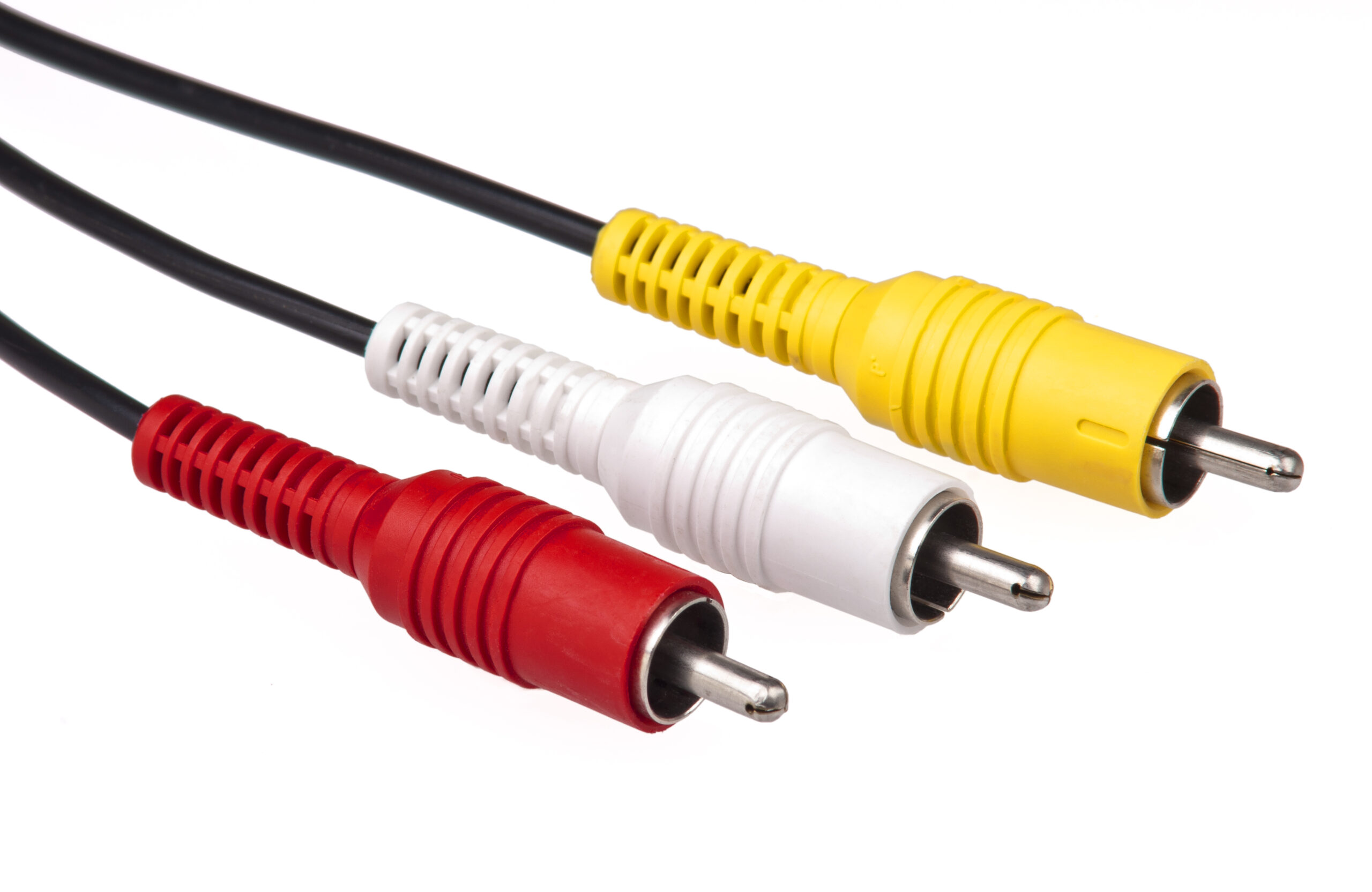

This is a type of connector that carries electrical signals. Introduced by the Radio Corporation of America (RCA) in 1930, this type of connector was originally used for audio, with the red connector for the right audio channel, and white/black for the left channel. It has been adapted to include composite and component video as well, with additional cables/connectors for each new signal. RCA cables that carry audio and composite video have red (right audio), white/black (left audio), and yellow (video) connectors.

RCA composite cable (image source: Wikipedia)

Release

A sound’s envelope includes the attack, decay, sustain, and release (ADSR). After a sound is initiated, the attack shapes how the sound swells in. The decay indicates how long the sound takes to drop from its initial peak to the sustain level. Release indicates how long the sound takes to fade to silence once the note ends. So, if you are playing a keyboard and press and hold a key, the sound will swell in over time (attack), then drop off over time (decay) to the sustain level, where it stays while the key remains depressed. When you let go of the key, the release controls how quickly the sound dies away.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/9SMi47AEnSo&t=248.

Reverberation

Sometimes shortened to “reverb,” reverberation is the reflection of sound in a space that continues after the initial sound has stopped. This continual reflection off of surfaces makes the individual reflections indistinguishable, and the result is interpreted as a slow decay of the sound. Think of the low rumble of thunder after the initial lightning. The amount of reverberation is dependent on the surfaces within and the size of the space. A concert hall will reverberate differently than a small bedroom which will reverberate differently than the Grand Canyon.

Ribbon Mic

A dynamic microphone whose diaphragm/transducer is a thin metal ribbon (typically aluminum) suspended in a magnetic field. When sound causes the ribbon to vibrate, a small electrical current is generated within the ribbon. These are notoriously delicate microphones due to the thinness of the ribbons, which are often 50 times thinner than a human hair. Most ribbon mics are bi-directional, meaning they have a figure-8 polar pattern, capturing sound well to the left and right (or forward and backward).

“Ribbon Mics: How Do They Work?,” posted April 29, 2016, by Sweetwater, YouTube, 04:36, https://youtu.be/_59lfbPbpXk?start=5.

Sample Rate

Sample rate (also called sampling frequency) is the number of times per second an audio signal is sampled as it is converted from analog to digital (it does not apply to analog-to-analog conversions). Along with bit depth, sample rate helps to determine the resolution of audio. Consider a video camera that takes individual frames multiple times a second. Typically, a video camera will shoot somewhere between 24 and 30 frames per second, so a video shot at 5 frames per second will feel jumpy and disjointed. When sampling audio, devices snatch segments of audio tens of thousands of times a second. The more samples, the higher the fidelity of the sound. Sample rates are expressed in hertz (samples per second). Common sample rates include 44.1 kHz (compact discs), 48 kHz (video and film), and 96 kHz (high-resolution audio).
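Sampling can be pictured as measuring a waveform at evenly spaced instants. The sketch below takes snapshots of a pure tone at a chosen sample rate; the function name `sample_sine` and its parameters are hypothetical.

```python
import math


def sample_sine(frequency_hz: float, sample_rate_hz: int, duration_s: float):
    """Measure a sine wave `sample_rate_hz` times per second."""
    count = round(sample_rate_hz * duration_s)
    return [
        math.sin(2 * math.pi * frequency_hz * n / sample_rate_hz)
        for n in range(count)
    ]


# One hundredth of a second of a 440 Hz tone at the CD sample rate.
samples = sample_sine(440, 44_100, 0.01)
print(len(samples), "samples")
```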

“What Sample Rate Should You Use?,” posted November 28, 2022, by Help Me Devvon, YouTube, 08:04, https://youtu.be/0xBdiEcxL3s?t=6.

Sound Wave

A sound wave is an oscillating disturbance of a medium (air, water, or other matter) that propagates away from the source. The disturbed particles jostle the adjacent particles, which in turn disturb their neighbors, creating a longitudinal wave effect.

“Sound Waves In Action | Waves | Physics | FuseSchool,” posted July 7, 2020, by FuseSchool – Global Education, YouTube, 04:18, TgJKf3G6LuE?t=28.

Stereo

Two related audio channels which can mimic a virtual sound space when listened to on a pair of headphones or speakers. Stereo signals are meant to recreate the binaural experience of humans with two functioning ears.

Sustain

The sustain level indicates what volume level the sound decreases to during the decay. If you are playing a keyboard, and your sustain is at zero, and you press and hold a key, the sound will occur (shaped by the attack), then it will decrease (and the time it decreases is the decay level), and then there will be no sound, because there is no sustain (zero volume). If the sustain is turned up, then once you press the key, after the attack and decay, if you continue to hold the key, the sound will remain at a volume level set by the sustain as long as you continue to hold the key.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/9SMi47AEnSo&t=173.

Timbre

The characteristic tone or “color” of a sound. It is this unique blend of harmonics that allows us to differentiate between musical instruments that are all playing the same note. A banjo sounds different than an oboe, for example.

Track

A track is an isolated sound recording which can be mixed with other tracks to create more layered sounds.

Transient

This is the initial, high-amplitude sound at the beginning of a waveform, often associated with the sound generated when a musical instrument’s waveform begins.

TRS

This refers to the tip, ring, and sleeve on the end of a 1/8 in. (3.5mm) or a 1/4 in (6.35mm) audio connector. These can carry balanced or unbalanced signals and mono or stereo signals. TRS cables most commonly are used for stereo signals, since TS cables can only carry mono signals.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/m4hy63fEgA0.

TS

This refers to the tip and sleeve on the end of a 1/8 in. (3.5mm) or a 1/4 in. (6.35mm) audio connector. TS cables carry unbalanced, mono signals. They are incapable of carrying balanced or stereo signals.

“Intro To Synthesis Part 4: Exploring Attack, Decay, Sustain & Release,” posted February 26, 2018, by Reverb, YouTube, 06:54, https://youtu.be/m4hy63fEgA0.

Waveform

This is an abstracted graphic representation of a sound wave’s shape over time.

XLR

XLR cables carry balanced signals and are capable of providing 48 Volt phantom power to microphones. The name comes from the Cannon corporation that pioneered the technology. Cannon took their X model connector and added a latch (L) to prevent it from being disconnected easily. The “R” references the rubber that encases the female contacts. Much like a TRS connector which has two signals and a ground, XLR connectors have three pins: positive, negative, and ground.

“What is XLR?,” posted April 28, 2021, by LEWITT, YouTube, 05:22, https://youtu.be/ZaHgyJ8v_jc?t=18.